Speakers announced! Join us in DC on May 14th for Offset '26.

Develop. Deploy. Defend.

The 2F Suite simplifies and accelerates every step of the software development and delivery process, including Day 2 operations and extensibility.

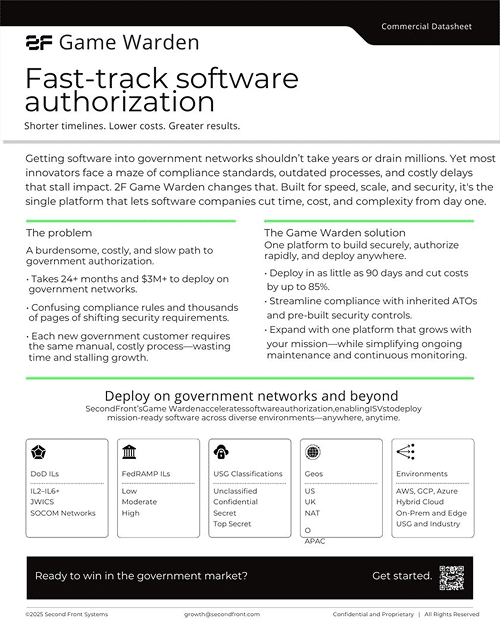

Game Warden product overview

See how you can rapidly onboard, host and deploy applications to government networks.

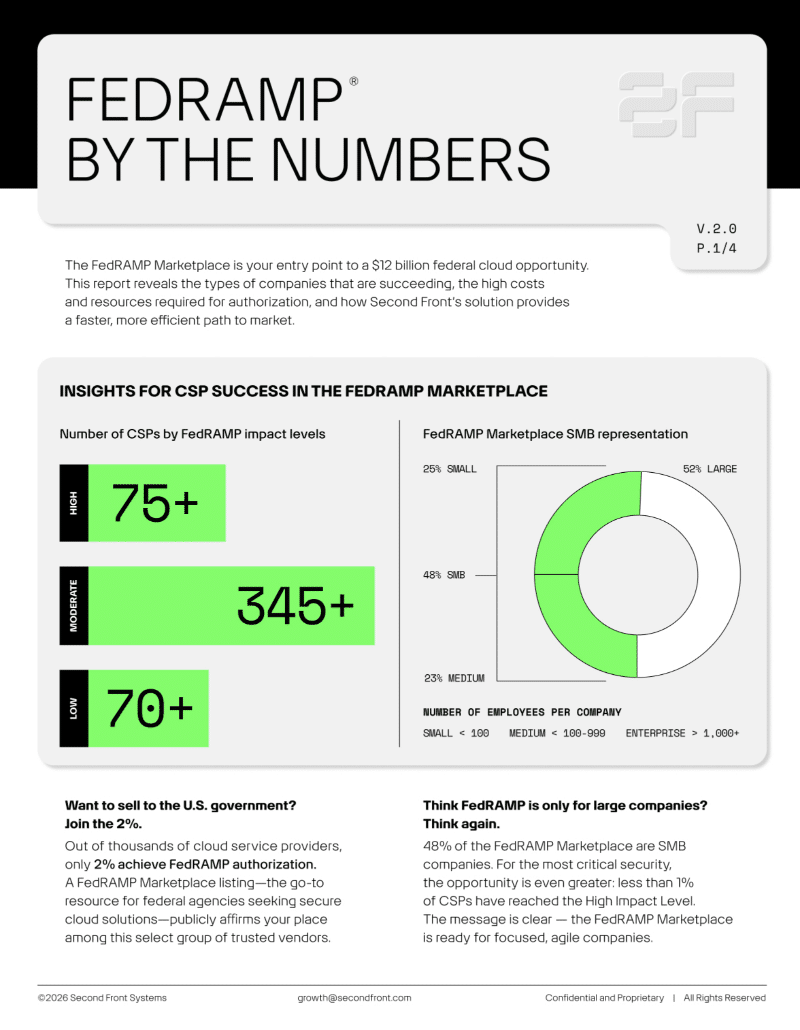

FedRAMP by the numbers

Unlock exclusive access to our FedRAMP By the Numbers Infographic—your front-row pass to a $12 billion federal cloud market opportunity!

Trusted. Proven. Relentless.

Leading software providers and government agencies around the world trust us to deliver secure technology.

2F Game Warden is FedRAMP High authorized

With 2F Game Warden for FedRAMP, deliver your cloud service to federal civilian agencies faster—accelerating authorization and opening federal market access.

Solutions that empower and transform.

Whether you are delivering software to the public sector for the first time or need a hand navigating the complex accreditation process, 2F is your one-stop shop.

For Government

For International

Integrate fast-tracks IL6 accreditation

See how Second Front helped Integrate fast-track IL6 accreditation and deploy to a classified environment in under 12 months—paving the way for a $25M Phase III SBIR award.

Sustainment earns DoD accreditation in 58 days

See how Sustainment leveraged 2F Game Warden to deploy to the Air Force at the speed of relevance.

Your command center for knowledge and innovation.

Strategic insights, mission-ready resources, and frontline expertise—all in one place.